Distributed GraphQL with Hasura and AWS RDS Aurora Postgres

Your apps can respond faster when the APIs they call are physically located close to your end users. This tutorial uses CloudFlow to deploy the open-source Hasura container to multiple datacenters, configured to use AWS RDS Aurora Postgres as its Postgres database backend.

The Hasura container we will use (hasura/graphql-engine) is available on Docker Hub.

Note: Before starting, create a new CloudFlow Project, then delete the default Deployment and ingress-upstream Service to prepare the Project for your new deployment.

Prerequisites

- You need an account on AWS.

Create a New AWS RDS Aurora Postgres Database

Create a new Postgres instance for Hasura to connect to. This tutorial uses AWS RDS Aurora Postgres, but any Postgres database will work. The following guidance covers each section of the AWS creation process.

Create database

- In your AWS console, search for RDS.

- Choose "Create database".

- Choose "Standard create".

Engine options

- Engine type is "Amazon Aurora".

- Edition is "Amazon Aurora PostgreSQL-Compatible Edition".

Templates

- Use the "Dev/Test" Template.

Settings

- DB cluster identifier: accept the suggested value, such as "database-1".

- Master username: leave it as "postgres".

- Choose a strong password.

Instance configuration

- DB instance class: Serverless.

- Capacity range: leave the defaults.

Availability & durability

- Multi-AZ deployment: "Don't create an Aurora Replica".

Connectivity

- Network type: IPv4.

- VPC: Default.

- DB subnet group: default.

- Public access: Yes.

- VPC security group: "Create new".

- New VPC security group name: "hasura" (or any name of your choosing).

- Availability Zone: No Preference.

- Database port: 5432 (the default).

Finishing Up

Ignore the remaining sections of the AWS creation process.

Choose "Create database" and wait several minutes for the database to become available.

Expose Your Database to the Internet

In general, CloudFlow deployments use IP addresses that change as the workload moves according to developer requirements. Because of this, you cannot allow or block database traffic by IP address or range, so you must allow connections from all IP addresses, typically by specifying the CIDR range 0.0.0.0/0. While this might seem concerning, relying solely on IP filtering for security is generally ineffective and can lead to poor security practices. Use a strong password, and be sure to use SSL when connecting to a production database.

When you created your database with public access enabled, AWS allowed inbound connections only from your own computer's IP address. Because the Hasura container running in CloudFlow will contact the database from many different IP addresses, you now need to adjust the VPC security group to allow all IPv4 addresses (0.0.0.0/0).
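If you prefer to script this change rather than use the console steps that follow, the same kind of inbound rule can be added with boto3's EC2 client. This is a minimal sketch that assumes boto3 is installed and AWS credentials are configured; the helper names and the security group ID are hypothetical placeholders. Unlike the console steps, it only adds the 0.0.0.0/0 rule and does not remove the existing single-IP rule.

```python
from typing import Any


def postgres_ingress_permissions(port: int = 5432, cidr: str = "0.0.0.0/0") -> list[dict[str, Any]]:
    """Build the IpPermissions payload for one TCP ingress rule on the Postgres port."""
    return [
        {
            "IpProtocol": "tcp",
            "FromPort": port,
            "ToPort": port,
            # 0.0.0.0/0 corresponds to "Anywhere-IPv4" in the console
            "IpRanges": [{"CidrIp": cidr, "Description": "Hasura on CloudFlow"}],
        }
    ]


def open_postgres_port(group_id: str, region: str) -> None:
    """Add the rule to an existing security group (existing rules are left in place)."""
    import boto3  # imported lazily so the payload builder works without boto3 installed

    ec2 = boto3.client("ec2", region_name=region)
    ec2.authorize_security_group_ingress(
        GroupId=group_id,
        IpPermissions=postgres_ingress_permissions(),
    )
```

For example, open_postgres_port("sg-0123456789abcdef0", "us-east-1"), substituting the ID of your "hasura" security group and your own region.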

- In the RDS console, select your cluster's writer instance.

- Select the Connectivity & security tab.

- In the Security section, select the VPC security group, which we called "hasura" above.

- In the Inbound rules tab, you will see a single rule allowing your own IP address.

- Choose "Edit inbound rules".

- Remove the rule for your IP address and add a new one with the source "Anywhere-IPv4" (0.0.0.0/0).

- Choose "Save rules".

- From a different computer (with a different IP address), test access by running telnet YOUR-ENDPOINT 5432, where YOUR-ENDPOINT is your writer instance endpoint. If it connects, the rule is working.
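If telnet isn't available on your machine, the same reachability check can be done with the Python standard library. This is a minimal sketch; can_connect is a hypothetical helper name, and a successful TCP connection only shows that the security group rule lets traffic through, not that your credentials are correct.

```python
import socket


def can_connect(host: str, port: int = 5432, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        # create_connection resolves the hostname and tries each address in turn
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS resolution failures
        return False
```

Call it with your writer instance endpoint, for example can_connect("YOUR-ENDPOINT"); it should return True once the new inbound rule is saved.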

Get Your Connection String

Your connection string will have the form postgresql://postgres:[YOUR-PASSWORD]@[YOUR-CLUSTER-ENDPOINT]:5432/postgres. For example, with mock credentials: postgresql://postgres:abc1234@database-1.cluster-ckremhbvmdf0.us-east-1.rds.amazonaws.com:5432/postgres

- In the RDS console, choose your DB cluster identifier, likely "database-1".

- In the Connectivity & security tab, copy the writer instance endpoint and substitute it for [YOUR-CLUSTER-ENDPOINT]. Save the completed connection string for the Hasura deployment, which comes next.
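Assembling the connection string by hand is error-prone when the password contains characters such as @, : or /, which must be percent-encoded inside a URI. The following is a minimal sketch; build_connection_string is a hypothetical helper, and the optional sslmode=require suffix is the standard libpq parameter for requiring TLS, which Hasura database URLs generally accept (worth confirming against the Hasura documentation for your version).

```python
from urllib.parse import quote


def build_connection_string(
    password: str,
    endpoint: str,
    user: str = "postgres",
    database: str = "postgres",
    port: int = 5432,
    require_ssl: bool = False,
) -> str:
    """Assemble a postgresql:// URI in the form described above."""
    # Percent-encode credentials so "@", ":" or "/" in them don't break URI parsing
    user_enc = quote(user, safe="")
    password_enc = quote(password, safe="")
    uri = f"postgresql://{user_enc}:{password_enc}@{endpoint}:{port}/{database}"
    if require_ssl:
        # libpq parameter asking the client to use TLS for the connection
        uri += "?sslmode=require"
    return uri
```

For example, build_connection_string("abc1234", "database-1.cluster-ckremhbvmdf0.us-east-1.rds.amazonaws.com") reproduces the mock connection string above.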

You have just created an empty Postgres database on AWS RDS Aurora. Hasura will populate it with metadata upon first connection.

Create a Deployment for Hasura

Next, create a Deployment for Hasura in a file named hasura-deployment.yaml. It directs CloudFlow to run the open-source Hasura container. Replace YOUR_CONNECTION_STRING with the connection string you saved above, and replace YOUR_ADMIN_PASSWORD with an admin secret of your choosing, which you will use to log in to the Hasura console.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: hasura
  name: hasura
spec:
  replicas: 1
  selector:
    matchLabels:
      app: hasura
  template:
    metadata:
      labels:
        app: hasura   # the ingress-upstream Service selects pods by this label
    spec:
      containers:
        - image: hasura/graphql-engine
          imagePullPolicy: Always
          name: hasura
          resources:
            requests:
              memory: ".5Gi"
              cpu: "500m"
            limits:
              memory: ".5Gi"
              cpu: "500m"
          env:
            # Values are quoted so YAML does not misread special characters in them
            - name: HASURA_GRAPHQL_DATABASE_URL
              value: "YOUR_CONNECTION_STRING"
            - name: HASURA_GRAPHQL_ENABLE_CONSOLE
              value: "true"
            - name: HASURA_GRAPHQL_ADMIN_SECRET
              value: "YOUR_ADMIN_PASSWORD"
```

Apply this deployment resource to your Project with either the Kubernetes dashboard or kubectl apply -f hasura-deployment.yaml.
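Before applying, it can help to confirm that both placeholders were actually replaced, since Hasura cannot connect to a database whose URL is the literal text YOUR_CONNECTION_STRING. A minimal sketch follows; the function names are hypothetical, and the default file name assumes you saved the manifest as hasura-deployment.yaml.

```python
from pathlib import Path

# Placeholder tokens used in this tutorial's Deployment manifest
PLACEHOLDERS = ("YOUR_CONNECTION_STRING", "YOUR_ADMIN_PASSWORD")


def unreplaced_placeholders(manifest_text: str) -> list[str]:
    """Return each placeholder token that still appears in the manifest text."""
    return [token for token in PLACEHOLDERS if token in manifest_text]


def check_manifest(path: str = "hasura-deployment.yaml") -> None:
    """Raise ValueError if the manifest at path still contains placeholders."""
    leftover = unreplaced_placeholders(Path(path).read_text(encoding="utf-8"))
    if leftover:
        raise ValueError(f"{path} still contains: {', '.join(leftover)}")
```

Run check_manifest() in the directory containing the file; it raises ValueError listing any leftover placeholders and returns None otherwise.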

Expose the Hasura Console on the Internet

To expose the Hasura console on the Internet, create ingress-upstream.yaml as defined below.

```yaml
apiVersion: v1
kind: Service
metadata:
  labels:
    app: ingress-upstream
  name: ingress-upstream
spec:
  ports:
    - name: 80-80
      port: 80
      protocol: TCP
      targetPort: 8080   # Hasura's default listening port
  selector:
    app: hasura          # must match the pod labels in the Hasura Deployment
  sessionAffinity: None
  type: ClusterIP
```

Apply this service resource to your Project with either the Kubernetes dashboard or kubectl apply -f ingress-upstream.yaml.
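The Service reaches Hasura because its selector (app: hasura) matches the labels on the Deployment's pod template, not because of the Service's own app: ingress-upstream label. With Kubernetes equality-based selectors, a pod matches when every key/value pair in the selector appears in its labels. The sketch below models only that rule; selector_matches is a hypothetical helper, and the dictionaries copy values from the two manifests above.

```python
def selector_matches(selector: dict[str, str], pod_labels: dict[str, str]) -> bool:
    """True if every key/value pair in an equality-based selector appears in the pod labels."""
    # A Service with no selector at all is a special case (Kubernetes creates
    # no endpoints for it automatically); that case is not modeled here.
    return all(pod_labels.get(key) == value for key, value in selector.items())


# Values copied from the manifests in this tutorial
SERVICE_SELECTOR = {"app": "hasura"}              # ingress-upstream: spec.selector
SERVICE_OWN_LABELS = {"app": "ingress-upstream"}  # ingress-upstream: metadata.labels
HASURA_POD_LABELS = {"app": "hasura"}             # hasura: spec.template.metadata.labels

print(selector_matches(SERVICE_SELECTOR, HASURA_POD_LABELS))    # True: traffic reaches Hasura
print(selector_matches(SERVICE_OWN_LABELS, HASURA_POD_LABELS))  # False: the Service's own label is irrelevant
```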

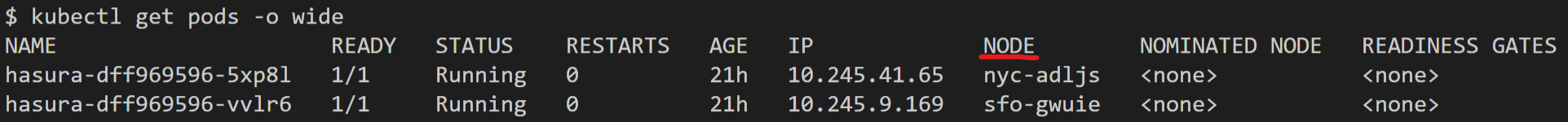

See the pods running on CloudFlow's network using kubectl get pods -o wide.

The -o wide switch shows the locations where your GraphQL API is running, as chosen by the default AEE location optimization strategy. CloudFlow adjusts where your GraphQL API is deployed according to where your traffic comes from.

Finally, follow the instructions for configuring DNS and TLS.
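Once DNS and TLS are in place, a quick way to confirm the deployment is serving traffic is Hasura's /healthz endpoint, which returns HTTP 200 when the server is healthy. This sketch uses only the Python standard library; hasura.example.com is a hypothetical hostname standing in for the one you configured, and the helper names are illustrative.

```python
import urllib.request


def healthz_url(hostname: str) -> str:
    """Build the health-check URL for a Hasura instance served over HTTPS."""
    return f"https://{hostname}/healthz"


def is_healthy(hostname: str, timeout: float = 5.0) -> bool:
    """Return True if Hasura's /healthz endpoint answers with HTTP 200."""
    try:
        with urllib.request.urlopen(healthz_url(hostname), timeout=timeout) as resp:
            return resp.status == 200
    except OSError:
        # URLError, HTTPError (non-2xx), DNS failures and timeouts are all OSError subclasses
        return False
```

For example, is_healthy("hasura.example.com") with your own hostname substituted.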

Experiment with Hasura

Now you can start using Hasura. Although the main purpose of the Postgres database here is to store Hasura metadata, you can also use the Data section of the Hasura console to create your own tables in the same Postgres database, and then use Hasura's querying ability to access that data.
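Besides the console, you can query Hasura over HTTP: it serves GraphQL at /v1/graphql and accepts the admin secret in the x-hasura-admin-secret header. The following minimal sketch uses only the Python standard library; the hostname, the helper names, and any table you query are hypothetical.

```python
import json
import urllib.request
from typing import Any, Optional


def graphql_request(hostname: str, admin_secret: str, query: str,
                    variables: Optional[dict[str, Any]] = None) -> urllib.request.Request:
    """Build a POST to Hasura's /v1/graphql endpoint, authenticated with the admin secret."""
    body = json.dumps({"query": query, "variables": variables or {}}).encode("utf-8")
    return urllib.request.Request(
        f"https://{hostname}/v1/graphql",
        data=body,
        headers={
            "Content-Type": "application/json",
            # Same value as HASURA_GRAPHQL_ADMIN_SECRET in the Deployment
            "x-hasura-admin-secret": admin_secret,
        },
        method="POST",
    )


def run_query(hostname: str, admin_secret: str, query: str,
              variables: Optional[dict[str, Any]] = None, timeout: float = 10.0) -> dict[str, Any]:
    """Send the request and decode Hasura's JSON response."""
    req = graphql_request(hostname, admin_secret, query, variables)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

For example, run_query("hasura.example.com", "YOUR_ADMIN_PASSWORD", "query { __typename }") should return a JSON object with a data key; once you create a table in the Data section, you can query it by name in the same way.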